Virtually all modern operating systems, including Windows, macOS, and Linux, as well as the Android and iOS mobile systems, support multicore SMP systems.

Adding CPUs to a multiprocessor system increases computing power. However, the concept does not scale very well: once we add too many CPUs, contention for the system bus becomes a bottleneck and performance begins to degrade.

An alternative approach is to provide each CPU (or group of CPUs) with its own local memory that is accessed via a small, fast local bus. The CPUs are connected by a shared system interconnect, so that all CPUs share one physical address space. This approach is known as non-uniform memory access, or NUMA. Because a CPU that accesses its own local memory does not compete for the system interconnect, NUMA systems can scale more effectively as more processors are added. A potential drawback of a NUMA system is increased latency when a CPU must access remote memory across the system interconnect, creating a possible performance penalty.

Figure: NUMA multiprocessing architecture.
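The NUMA trade-off described above can be put into a rough number. The sketch below is a simplified model, not a measurement: it assumes every memory access is either local or remote, and the function name `average_access_ns` and the latency figures are hypothetical values chosen only for illustration.

```python
def average_access_ns(local_fraction, local_ns, remote_ns):
    """Weighted average memory latency for a simplified NUMA model.

    local_fraction: share of accesses served from the CPU's own local memory (0.0 to 1.0).
    local_ns, remote_ns: assumed latencies in nanoseconds (hypothetical numbers).
    """
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns


# Hypothetical latencies: 80 ns for local memory, 140 ns across the interconnect.
for fraction in (1.0, 0.5, 0.0):
    print(fraction, average_access_ns(fraction, 80, 140))
```

Under these assumed numbers, the more of its data a process keeps in local memory, the closer the average stays to the local latency.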

By increasing the number of processors, we expect to get more work done in less time. When multiple processors cooperate on a task, however, a certain amount of overhead is incurred in keeping all the parts working correctly. This overhead, plus contention for shared resources, lowers the expected gain from additional processors.

The most common multiprocessor systems use symmetric multiprocessing (SMP), in which each processor performs all tasks, including operating-system functions and user processes. Each CPU has its own set of registers, as well as a private (or local) cache. However, all processors share physical memory over the system bus. Since the CPUs are separate, one may be sitting idle while another is overloaded, resulting in inefficiencies. These inefficiencies can be avoided if the processors share certain data structures. A multiprocessor system of this form allows processes and resources, such as memory, to be shared dynamically among the various processors and can lower the workload variance among the processors.

In multicore systems, multiple computing cores reside on a single chip. A dual-core design, for example, has two cores on the same chip. Multicore systems can be more efficient than multiple chips with single cores because on-chip communication is faster than between-chip communication. In addition, one chip with multiple cores uses significantly less power than multiple single-core chips.
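The point about idle and overloaded CPUs can be illustrated with a small simulation. This is only a sketch under stated assumptions: the task costs are made up, the round-robin pinning stands in for "separate" CPUs, and the function names `private_queues` and `shared_queue` are hypothetical, not taken from any operating system.

```python
import heapq


def private_queues(tasks, n_cpus):
    """Pin each task to one CPU up front (round-robin); CPUs never share work."""
    loads = [0] * n_cpus
    for i, cost in enumerate(tasks):
        loads[i % n_cpus] += cost
    return loads


def shared_queue(tasks, n_cpus):
    """All CPUs take the next task from one common queue as soon as they are free."""
    # Min-heap of (time at which the CPU becomes free, CPU id).
    free_at = [(0, cpu) for cpu in range(n_cpus)]
    heapq.heapify(free_at)
    loads = [0] * n_cpus
    for cost in tasks:
        time_free, cpu = heapq.heappop(free_at)
        loads[cpu] += cost
        heapq.heappush(free_at, (time_free + cost, cpu))
    return loads


# Hypothetical task costs in arbitrary time units.
tasks = [8, 1, 8, 1, 8, 1, 8, 1]
print("pinned:", private_queues(tasks, 2))  # one CPU does nearly all the work
print("shared:", shared_queue(tasks, 2))    # work is spread evenly
```

With this particular task list the pinned layout gives one CPU 32 units and the other 4, while the shared queue gives each CPU 18, which is the lower workload variance the text describes.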

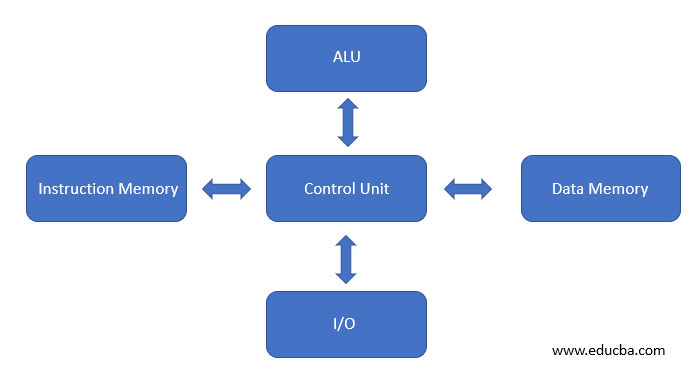

A computer system can be organized in different ways, which can be categorized according to the number of general-purpose processors used.

Older computers contained a single processor: one CPU with a single processing core. The core is the component that executes instructions and holds the registers for storing data. It is capable of executing a general-purpose instruction set, including instructions from processes. These systems also contain special-purpose processors in the form of device-specific processors, such as disk, keyboard, and graphics controllers. They run only a limited instruction set and do not run processes. In some cases the operating system gives them their tasks. For example, a disk-controller microprocessor receives a sequence of requests from the main CPU core and implements its own disk queue and scheduling algorithm. This arrangement relieves the main CPU of the overhead of disk scheduling. If there is only one general-purpose CPU with a single processing core, then the system is a single-processor system.

Modern computers are multiprocessor systems. In the traditional design, such systems have two (or more) processors, each with a single-core CPU. The processors share the computer bus and sometimes the clock, memory, and peripheral devices. The advantage of a multiprocessor system is that it increases the work done in a given period of time.
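To see which category a given machine falls into, one can ask the operating system how many CPUs it exposes. The sketch below uses Python's standard `os.cpu_count()`, which reports logical CPUs (so hardware threads are counted too) and may return `None` if the count cannot be determined. The helper name `classify` and its labels are hypothetical, chosen only to mirror the categories in the text.

```python
import os


def classify(cpu_count):
    """Map a CPU count to the categories used in the text (hypothetical helper)."""
    if cpu_count is None:
        return "unknown"
    if cpu_count == 1:
        return "single-processor"
    return "multiprocessor"


# os.cpu_count() counts logical CPUs, which is only an approximation of the
# number of general-purpose processing cores.
count = os.cpu_count()
print(count, classify(count))
```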
